After more than 13 years, and tens of thousands of questions, Moz Q&A closed on 12th December 2024. Whilst we’re not completely removing the content - many posts will still be possible to view - we have locked both new posts and new replies. More details here.

Unsolved Google Search Console Still Reporting Errors After Fixes

-

Hello,

I'm working on a website that was too bloated with content. We deleted many pages and set up redirects to newer pages. We also resolved an unreasonable number of 400 errors on the site.

I also removed several ancient sitemaps that listed content deleted years ago that Google was crawling.

According to Moz and Screaming Frog, these errors have been resolved. We've submitted the fixes for validation in GSC, but the validation repeatedly fails.

What could be going on here? How can we resolve these errors in GSC?

-

Here are some potential explanations and steps you can take to resolve the errors in GSC:

Caching: Sometimes, GSC may still be using cached data and not reflecting the recent changes you made to your website. To ensure you're seeing the most up-to-date information, try clearing your browser cache or using an incognito window to access GSC.

Delayed Processing: It's possible that Google's systems have not yet processed the changes you made to your website. Although Google typically crawls and indexes websites regularly, it can take some time for the changes to be fully reflected in GSC. Patience is key here, and you may need to wait for Google to catch up.

Incorrect Implementation of Redirects: Double-check that the redirects you implemented are correctly set up. Make sure they are functioning as intended and redirecting users and search engines to the appropriate pages. You can use tools like Redirect Checker to verify the redirects.

Check Robots.txt: Ensure that your website's robots.txt file is not blocking Googlebot from accessing the necessary URLs. Verify that the redirected and fixed pages are not disallowed in the robots.txt file.

Verify Correct Domain Property: Ensure that you have selected the correct domain property in GSC that corresponds to the website where you made the changes. It's possible that you might be validating the wrong property, leading to repeated failures.

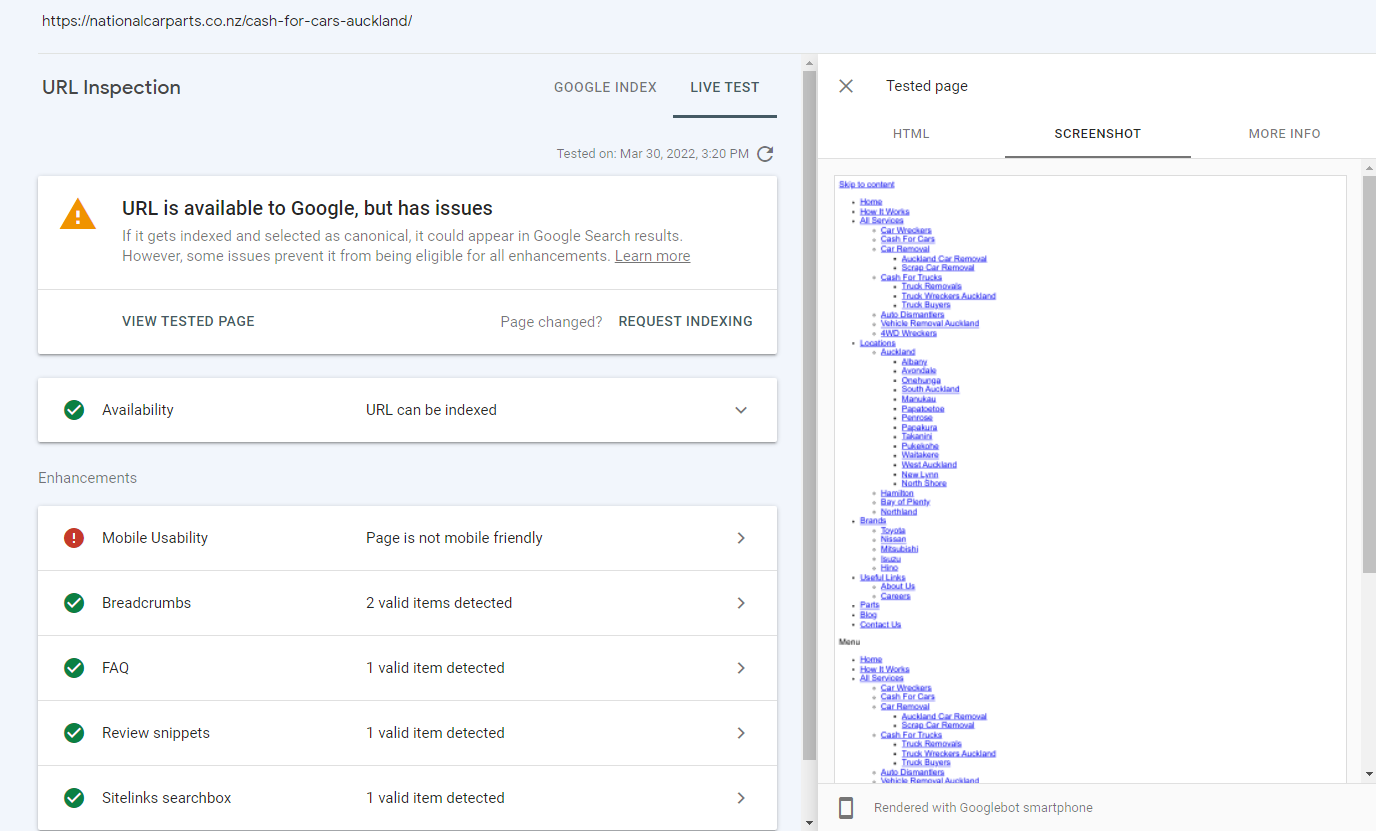

URL Inspection Tool: Use the URL Inspection tool in GSC to manually check specific URLs and see how Google is currently processing them. This tool provides information about indexing status, crawling issues, and any potential errors encountered.

Re-validate the Fixes: If you have already submitted the fixes for validation in GSC and they failed, try submitting them again. Sometimes, the validation process can encounter temporary glitches or errors.
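If you'd rather script the redirect check than paste URLs into a checker one by one, something like the following may help. It is only a rough sketch using the Python standard library: the function names `head` and `trace_redirects` are made up for this example, and it assumes your server answers HEAD requests the same way it answers GET, which is not always true.

```python
import http.client
from urllib.parse import urljoin, urlsplit

REDIRECT_CODES = (301, 302, 303, 307, 308)

def head(url):
    """Send one HEAD request without following redirects.

    Returns (status_code, Location header or None).
    """
    parts = urlsplit(url)
    conn_cls = (http.client.HTTPSConnection if parts.scheme == "https"
                else http.client.HTTPConnection)
    conn = conn_cls(parts.netloc, timeout=10)
    try:
        path = parts.path or "/"
        if parts.query:
            path += "?" + parts.query
        conn.request("HEAD", path, headers={"User-Agent": "redirect-check-sketch"})
        resp = conn.getresponse()
        return resp.status, resp.getheader("Location")
    finally:
        conn.close()

def trace_redirects(url, fetch=head, max_hops=10):
    """Follow a redirect chain hop by hop.

    Returns a list of (url, status) pairs. A final entry with status
    "loop" means the chain came back to a URL it had already visited.
    """
    chain, seen = [], set()
    while len(chain) < max_hops:
        if url in seen:
            chain.append((url, "loop"))
            break
        seen.add(url)
        status, location = fetch(url)
        chain.append((url, status))
        if status not in REDIRECT_CODES or not location:
            break
        # Location may be relative, so resolve it against the current URL.
        url = urljoin(url, location)
    return chain
```

Calling `trace_redirects("https://example.com/old-page")` (a placeholder URL) returns every hop with its status code. A healthy redirect is usually a single 301 followed by a 200; long chains, loops, or a chain that ends in a 404 are the kind of thing that can make validation fail.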

If you have taken the appropriate steps and the validation failures persist in GSC, it may be worth reaching out to Google's support team for further assistance. They can help troubleshoot the specific issues you are facing and provide guidance on resolving the errors.
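The robots.txt check mentioned above can also be scripted. This is a minimal sketch with Python's built-in `urllib.robotparser`; the helper name `blocked_urls` and the example rules are hypothetical. Keep in mind that this parser does simple prefix matching and does not reproduce Google's handling of wildcards or rule precedence, so treat the result as a first pass and confirm anything suspicious in GSC itself.

```python
from urllib.robotparser import RobotFileParser

def blocked_urls(robots_txt, urls, user_agent="Googlebot"):
    """Return the URLs that the given robots.txt text disallows for user_agent."""
    parser = RobotFileParser()
    # parse() takes the file's lines, so no network request is made here.
    parser.parse(robots_txt.splitlines())
    return [u for u in urls if not parser.can_fetch(user_agent, u)]

# Hypothetical rules and URLs, for illustration only.
example_rules = "User-agent: *\nDisallow: /private/\n"
example_urls = [
    "https://example.com/private/page",
    "https://example.com/blog/post",
]
print(blocked_urls(example_rules, example_urls))
```

To test against your live file instead of pasted text, `RobotFileParser` also has `set_url()` and `read()`, which download and parse the robots.txt at the URL you give it.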

-

We're facing the same redirect error on our website, https://ecomfist.com/, even after the fix.

-

@tif-swedensky It usually takes between a week and three months for GSC to show the right results. Don't worry about it; if you've fixed the errors, you're good to go.

-

Hi! Google Search Console has this issue, and I would recommend not paying much attention to it. If you know that everything's correct on the website, then you don't need to worry just because of Search Console issues.

-

In this case, it's likely that the Google bots may have crawled through your site before you fixed the errors and haven't yet recrawled to detect the changes. To fix this issue, you'll need to invest in premium SEO tools such as Ahrefs or Screaming Frog that can audit your website both before and after you make changes. Once you have them in place, take screenshots of the findings both before and after fixing the issues and send those to your client so they can see the improvements that have been made.

To give you an example, I recently encountered a similar issue while working with a medical billing company named HMS USA LLC. After running some SEO audits and making various fixes, the GSC errors had been cleared. However, it took a few attempts to get it right as the changes weren't detected on the first recrawl.

Hopefully, this information is useful and helps you understand why your GSC issues may still be showing up after being fixed. Good luck!

-

Hi,

We have had a similar problem before. We are an e-commerce company with the brand name VANCARO. As you know, user experience is very important for an e-commerce company, so we take the problems reported by GSC very seriously. But sometimes GSC updates are delayed, and you need to wait a little longer. I can also share another tool: https://pagespeed.web.dev/. Hope it helps.
