After more than 13 years, and tens of thousands of questions, Moz Q&A closed on 12th December 2024. Whilst we're not completely removing the content (many posts will still be viewable), we have locked both new posts and new replies.

Google News and Discover down by a lot

-

Hi,

Could you help me understand why my website's Google News and Discover performance dropped so suddenly and drastically in November? The numbers seem to have picked up a little since then, but they are nowhere close to what we used to see before.

We worked with a content site years ago that focused mostly on superhero movie news and a lot of their traffic came from Google News. One day it just dried up and dropped by more than 90%. They brought us on to help and what we figured out was they were feeding Google their sponsored posts for months. The posts were made to look like news but were very clearly sponsorships pushing product so they got hit with a penalty. Not sure if that is what is going on with you but it's food for thought. As Tom mentioned, they are very volatile channels.

-

@tom-capper Thanks for the answer Tom. Much appreciated!

-

Hi, sorry for super slow reply.

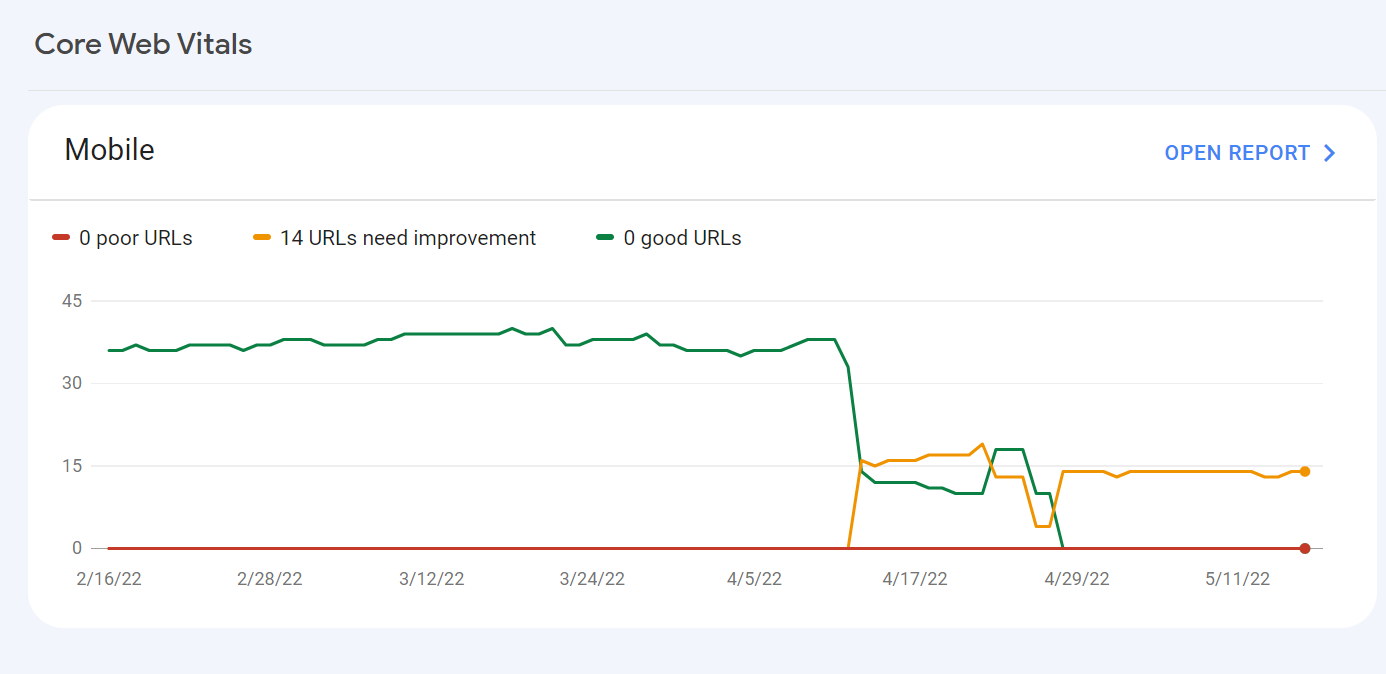

These are, unfortunately, highly volatile channels. There was a core update in November, and this can have an even larger impact on Discover than on organic.

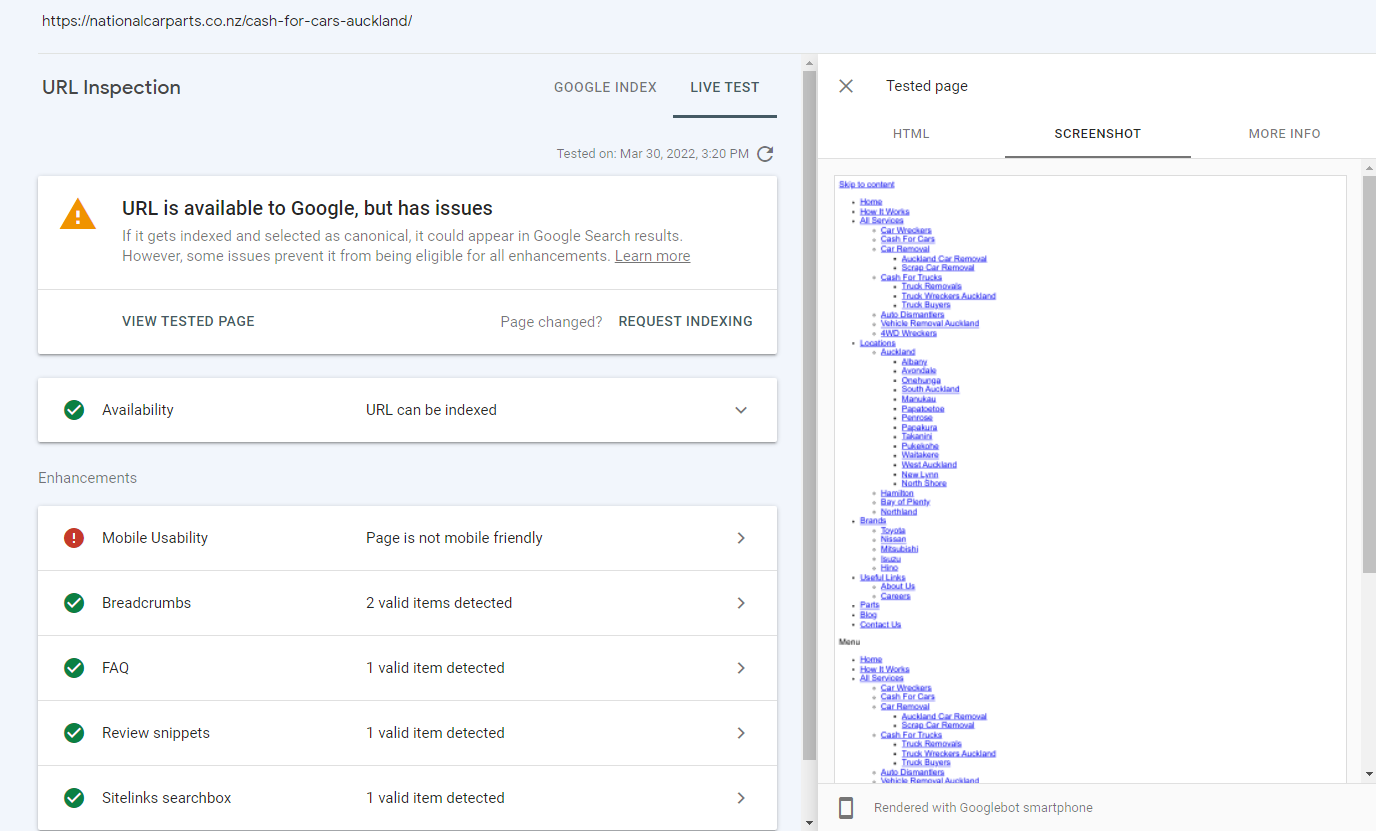

All you can really do is check how you perform vs competitors on the issues that Google claims to be valuing - brand trust, site speed, content quality/depth, and so on. Google Discover is particularly sensitive to speed.

Of course, you should also check for any technical hitch with your own site that might be causing issues.
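Before comparing yourself with competitors, it can help to put a number on the drop. Here is a minimal sketch, assuming you have exported daily Discover (or Google News) clicks from Search Console into `(date, clicks)` pairs. The function name `pct_change_around`, the sample figures, and the cutoff date are all hypothetical placeholders, not real data from this thread.

```python
from datetime import date
from statistics import mean


def pct_change_around(daily_clicks, cutoff):
    """Percentage change in average daily clicks before vs. on/after a cutoff date.

    daily_clicks: iterable of (date, clicks) pairs (assumed export format).
    cutoff: the date you suspect the drop started (an assumption you supply).
    """
    before = [clicks for day, clicks in daily_clicks if day < cutoff]
    after = [clicks for day, clicks in daily_clicks if day >= cutoff]
    if not before or not after:
        raise ValueError("need data on both sides of the cutoff")
    baseline = mean(before)
    if baseline == 0:
        raise ValueError("baseline average is zero")
    return (mean(after) - baseline) / baseline * 100.0


# Hypothetical sample data, for illustration only.
sample = [
    (date(2023, 11, 1), 1000),
    (date(2023, 11, 2), 1200),
    (date(2023, 11, 3), 300),
    (date(2023, 11, 4), 250),
]
change = pct_change_around(sample, date(2023, 11, 3))
print(f"Average daily clicks changed by {change:.1f}%")
```

Running the same comparison separately for Discover, Google News, and organic search shows whether the drop is limited to the volatile channels or affects the whole site, which would point more towards a technical problem.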
