After more than 13 years, and tens of thousands of questions, Moz Q&A closed on 12th December 2024. Whilst we’re not completely removing the content - many posts will still be possible to view - we have locked both new posts and new replies.

Is Google suppressing a page from results - if so why?

-

UPDATE: It seems the issue was that pages were accessible via multiple URLs (i.e. with and without trailing slash, with and without .aspx extension). Once this issue was resolved, pages started ranking again.

Our website used to rank well for a keyword (top 5), though this was over a year ago now. Since then the page no longer ranks at all, but sub pages of that page rank around 40th-60th.

- I searched for our site and the term on Google (i.e. 'Keyword site:MySite.com') and increased the number of results to 100, again the page isn't in the results.

- However when I just search for our site (site:MySite.com) then the page is there, appearing higher up the results than the sub pages.

I thought this may be down to keyword stuffing; there were around 20-30 instances of the keyword on the page, although roughly the same number of keywords appeared on each sub page as well.

I've now removed some of the excess keywords from all sections as it was getting in the way of usability as well, but I just wanted some thoughts on whether this is a likely cause or if there is something else I should be worried about.
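For anyone wanting to put a number on the keyword usage before and after a clean-up, here is a minimal sketch. The function name `keyword_stats` and the sample text are made up for illustration, and nothing here reflects a threshold Google is known to use; it only counts occurrences.

```python
import re

def keyword_stats(text: str, keyword: str) -> tuple[int, int, float]:
    """Return (occurrences, total_words, share_of_words) for a keyword phrase.

    Hypothetical helper: matching is case-insensitive and whole-word only.
    """
    words = re.findall(r"[a-z0-9']+", text.lower())
    kw_words = re.findall(r"[a-z0-9']+", keyword.lower())
    n = len(kw_words)
    if n == 0 or not words:
        return 0, len(words), 0.0
    # Slide a window of len(keyword) words across the page text.
    hits = sum(1 for i in range(len(words) - n + 1) if words[i:i + n] == kw_words)
    # Share of all words on the page that belong to a keyword occurrence.
    return hits, len(words), hits * n / len(words)

# Illustrative text only, not the real page copy.
sample = "Sage ERP X3 is great. Try sage erp x3 today."
print(keyword_stats(sample, "sage erp x3"))
```

Running the same count on the main page and on each sub page makes it easier to see whether one page really stands out.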

-

Technically the disavow acts like a nofollow, so unless you think they might turn into "followed" at some point, you do not need to disavow them.

It can take 6+ months for a disavow to take effect too. So if it was submitted only recently, it might need some more time.

-

Unfortunately I've already been through the Webmaster Tools links and disavowed hundreds of domains (blog comment spam primarily).

I did overlook any press releases though, whether hosted on PRWeb or picked up on other sites, so the question remains should these be disavowed despite the fact they are no follow links?
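If more domains do end up being disavowed, the file itself is plain text with one entry per line: a full URL, or `domain:example.com` to cover a whole domain, with `#` starting a comment line. A rough sketch of building one from a list of link URLs follows; `build_disavow` and the example URLs are hypothetical, and whether nofollow links belong in the file at all is the open question above, not something this code decides.

```python
from urllib.parse import urlparse

def build_disavow(urls, comment=""):
    """Build disavow-file text with one 'domain:' line per unique host.

    Hypothetical helper. URLs without a scheme have no parseable host
    and are skipped.
    """
    domains = set()
    for url in urls:
        host = urlparse(url).hostname  # already lower-cased by urlparse
        if host:
            domains.add(host)
    lines = [f"# {comment}"] if comment else []
    lines += [f"domain:{d}" for d in sorted(domains)]
    return "\n".join(lines) + "\n"

# Made-up example links.
links = [
    "http://spam-blog.example/post?id=1",
    "http://spam-blog.example/other",
    "https://Forum.example/thread",
]
print(build_disavow(links, "blog comment spam"))
```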

-

Hi - I would recommend using webmaster tools in addition to Moz to check for backlinks. There are likely more links in there that OSE does not have.

What I usually do is pull the links from there, and crawl them with Screaming Frog (as some may be old and are now gone). There's a really good process for going through links here: http://www.greenlaneseo.com/blog/2014/01/step-by-step-disavow-process/ - although it's for disavowing in the article, you can use the process to find bad links for any situation.

-

There are only 7 external, equity passing links to this page - none of which use exact match anchor text.

There are also internal links; 284 banner links pointed to the page until last week (the same banner appeared on each page, hence the number) with the img alt text "sage erp x3 energise your business". In addition, there are links throughout the site that feature the exact match anchor text - it is in the nav on every page, for example. I'm not sure if Google would take this into account; in my opinion it shouldn't, as it is natural for on-site links to be exact match, unlike off-site links.

That leaves the PRWeb articles, hosted on the PR web site and on sites that picked up the article, which are all no follow with exact match anchor text.

The only other thing I can think of, which I mentioned previously, is that there are multiple valid URLs for each page (with and without www, with and without .aspx, etc.). This bumps up the number of internal links, increases the number of pages that can be indexed, and could trigger duplicate content issues and 'water down' SEO juice.

-

It's possible, although I would definitely look into any followed links that are of low quality or over optimized. The site may have just been over some sort of threshold and you'd want to reel back that percentage.
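To get a feel for "that percentage", one option is to work out what share of followed links use the exact match anchor. This is only a sketch: the `links` records and their `anchor` / `nofollow` fields are an assumed format (for example rows from a backlink export), and no particular threshold is known.

```python
def exact_match_share(links, keyword):
    """Share of followed links whose anchor text equals the keyword exactly.

    `links` is assumed to be a list of dicts with 'anchor' and 'nofollow'
    keys; this layout is hypothetical, not any tool's export format.
    """
    followed = [link for link in links if not link["nofollow"]]
    if not followed:
        return 0.0
    target = keyword.strip().lower()
    exact = sum(1 for link in followed if link["anchor"].strip().lower() == target)
    return exact / len(followed)

# Made-up sample data.
sample_links = [
    {"anchor": "Sage ERP X3", "nofollow": False},
    {"anchor": "Datel", "nofollow": False},
    {"anchor": "click here", "nofollow": False},
    {"anchor": "sage erp x3", "nofollow": True},  # ignored: nofollow
]
print(f"{exact_match_share(sample_links, 'sage erp x3'):.0%}")
```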

-

I've had a look and it seems all PRWeb links are no follow these days - could Google still be filtering due to anchor text despite this?

We have around 30 articles on PRWeb with around 3-400 pickups on really low quality sites, all with the exact match low quality anchor text links but they are all no follow.

-

Hi - yes, you'd want to clean up the links to that page, ideally in this order of preference:

- Try to get exact anchors changed on pages where the link quality is ok, but the anchors are over optimized

- Try to get links removed entirely from low quality pages

- If the first two options are not possible, then disavow the links.

- Ideally of course, you'd want to acquire some new trusted links to the page.

At minimum you'd want to see the page show up again for site: searches with the term in question. That to me would be a sign the filter being applied has been removed. I'm not sure how long this would take Google to do, it may depend on how successful you are at the steps above.

-

No manual actions are listed within Webmaster Tools; I'd checked previously as the fact pages are listed in site: searches but not site: searches containing the term in question (e.g. "sage erp x3 site:datel.info") made me think that search engines were removing results in some way.

Links crossed my mind - there is another page on the site that has a large number of very spammy links (hundreds of blog comments with exact match anchor), which I disavowed around 2-3 weeks ago. This page also suffers the same issue of appearing in the site search but not if the term is mentioned, and it doesn't rank for the term, though it used to.

As I mentioned in one of the other comments, the number of links that competitors have for these sorts of terms is very low, and PR Web seems to be something we've done that competitors haven't. How would I go about finding out if it is the culprit? Disavowing PR Web links, or seeing if the articles can be amended to remove the exact match anchor text?

-

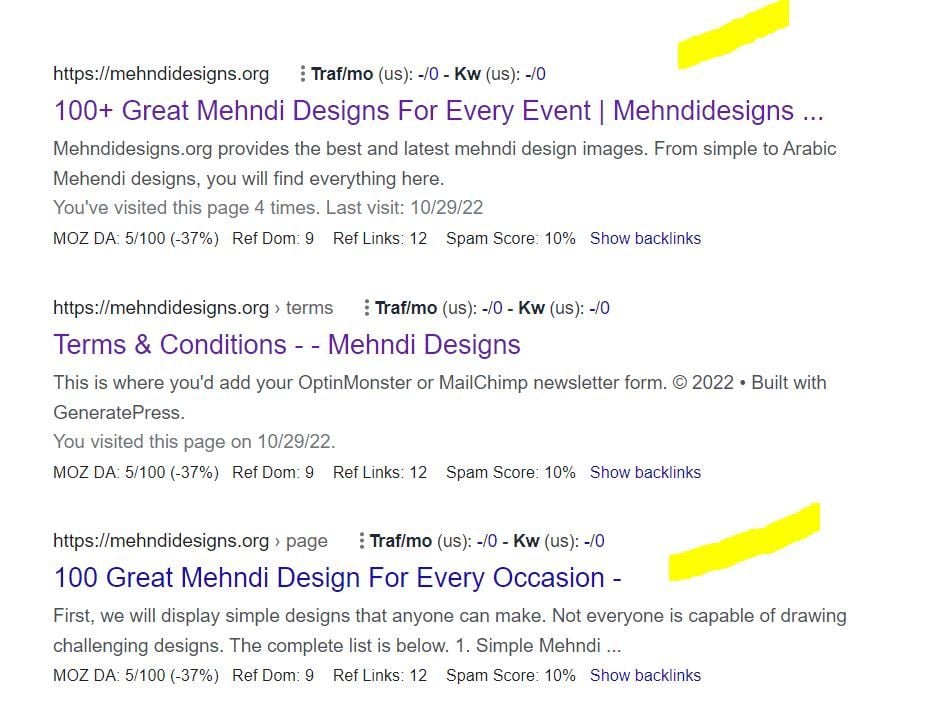

This doesn't feel like an on-page thing to me. Perhaps it's the exact match anchor links from Press Releases? See the open site explorer report. For example, here is one page with such a link: http://www.prweb.com/releases/2013/8/prweb10974324.htm

Google's algo could have taken targeted action on that one page due to the exact match anchor backlinks, and because many are from press releases.

Have you checked webmaster tools for a partial manual penalty?

The suppression of this page when using site: searches could further indicate a link based issue.

-

It's an area with very low volumes of links. Comparing to two competitors, looking at only external link equity passing links:

- We have links on two of our microsites (domain authority 11 and ...) and two press releases (DA 52 and 44)

- One competitor only has links from another site in its group (Domain Authority 12) and 301 redirects from another group site (Domain Authority 22) - no other links.

- Another competitor has guest blog links (Domain Authority 44) and links on one 3rd party site (DA 31)

The only significant difference in backlink profile is that Moz reports we have a vast number of internal links. I believe this is due to the main navigation having 2 sublayers via dropdowns - available on every page of the site.

In addition, URLs aren't rewritten in any way, so the same page can be accessed:

- with and without www

- With and without .aspx

- With and without the trailing slash

This creates a vast number of combinations, which results in our 400-500 page site having 100,000 internal links.

Though the site is available at different URLs, all links to the site and all links on the site use www and .aspx with no trailing slash, and Google has only indexed these pages. There don't appear to be any duplicates with different combinations of URL.

Other than not being an ideal set up, and it is something I want to change (IT are looking at installing the IIS rewrite module), could this be causing any harm I'm not aware of?
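To make the scale of the problem concrete, the variants can be enumerated and mapped back to the one preferred form (www, .aspx, no trailing slash). This is just a sketch: `url_variants` and `canonicalize` are hypothetical helpers, and it assumes every combination actually resolves on the server, which would need checking. The real fix would still be 301 redirects via the rewrite module.

```python
from itertools import product
from urllib.parse import urlsplit, urlunsplit

def url_variants(canonical):
    """List every www / .aspx / trailing-slash combination for one page."""
    parts = urlsplit(canonical)
    host = parts.netloc.removeprefix("www.")
    path = parts.path.rstrip("/").removesuffix(".aspx")
    variants = set()
    for www, ext, slash in product(("www.", ""), (".aspx", ""), ("/", "")):
        variants.add(urlunsplit((parts.scheme, www + host, path + ext + slash, "", "")))
    return sorted(variants)

def canonicalize(url):
    """Map any variant to the preferred form: www, .aspx, no trailing slash."""
    parts = urlsplit(url)
    host = parts.netloc.lower()
    if not host.startswith("www."):
        host = "www." + host
    path = parts.path.rstrip("/")
    # Leave the home page (empty path) alone; add the extension elsewhere.
    if path and not path.lower().endswith(".aspx"):
        path += ".aspx"
    return urlunsplit((parts.scheme, host, path, parts.query, ""))

page = "http://www.datel.info/our-solutions/sage-erp-x3.aspx"
for variant in url_variants(page):
    print(variant, "->", canonicalize(variant))
```

Three independent on/off choices give 2 x 2 x 2 = 8 addresses for a single page, all of which should collapse to the same canonical URL.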

-

Thanks so far everyone - the responses so far have been helpful and have given me some areas to improve.

Unless they are related and I just don't know, the responses so far have addressed how to rank better and why the page may not be ranking for a term, however as far as I can see they don't address why the specific page isn't listed in the SERPs when you search for 'sage erp x3 site:datel.info', but it does appear for 'site:datel.info'.

The page uses the keyword a fair amount, but instead every single sub-page is listed - as are pages with only one use of the keyword in a paragraph of text. Until last week the keyword was used excessively on this page (over 35 uses of the keyword on a relatively short page) - which is why I wondered if Google may have suppressed it for being too spammy for that keyword. I've changed it now, so if that were the case it should hopefully be changing soon, but I just wanted to know if that was a possible cause, or if there was something else that could be causing it.

-

Thanks, I'm now in the process of changing this - for some reason keywords have been overabundant in every link and subpage, so, as you say, it may be hard for Google to select the correct page.

-

I checked but nothing that I can find unfortunately (or fortunately depending how you look at it).

-

Have you inspected the backlink profile for your page vs. the top ranking competitors? What are you seeing as far as relevance, quality and quantity of inbound links?

-

Grab a unique string of about 20 words from the page and search for it between quotes. Your page content may have been scraped and republished on other websites.
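As a rough illustration of that check, the snippet below pulls a run of 20 words from the page text and wraps it in quotes for an exact-phrase search. The helper names and the placeholder text are made up; the search itself is still done by hand in the browser.

```python
from urllib.parse import quote_plus

def exact_phrase_query(page_text, start=0, length=20):
    """Take `length` consecutive words from the page and wrap them in quotes."""
    words = page_text.split()
    phrase = " ".join(words[start:start + length])
    return f'"{phrase}"'

def search_url(query):
    """Build a Google search URL for the query (paste it into a browser)."""
    return "https://www.google.com/search?q=" + quote_plus(query)

# Placeholder text standing in for the real page copy.
page_text = " ".join(f"word{i}" for i in range(40))
query = exact_phrase_query(page_text, start=5)
print(query)
print(search_url(query))
```

Picking the words from the middle of a paragraph, rather than a heading or boilerplate, makes it more likely the string is unique to the page.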

-

Thanks for the info. The page in discussion is as good as or even better than many other pages from your website trying to rank for the term 'sage erp x3'. I can clearly see an issue with too many pages from your website trying to rank for the term. My honest suggestion would be to have the page titles and descriptions written optimally. As a general rule, one page (internal page) should be optimized for one keyword/phrase.

Moreover, if you look at the page titles of your website in Google results, Google has actually modified the titles. This is a clear hint that it's time to optimize your titles.

Here you go for more: http://searchenginewatch.com/article/2342232/Why-Google-Changes-Your-Titles-in-Search-Results

It's actually very confusing for a search engine like Google to decide which page from your website should rank high for the term 'sage erp x3', as there are many pages competing for the same term, leading to keyword cannibalization.

Best regards,

Devanur Rafi

-

Looking at traffic, it looks like there was a drop in March 2013, but it's hard to pinpoint for certain as January 2013 was lower than January 2012, and there was a general downward trend until May 2013, at which point things leveled out.

Unfortunately I wasn't working at the company at the time, so I'm not sure if any of this would correspond with levels of marketing activity.

The site in question is http://www.datel.info and the specific page I'm looking into at the moment is http://www.datel.info/our-solutions/sage-erp-x3.aspx though it isn't the only page affected. I just find it odd that it appears for the site search, but not the keyword & site search.

The site also has a fair number of low quality links from comments on Chinese blogs and forums due to the activity of an 'SEO Agency' 3 years ago. As I'm unable to get these removed, I've disavowed them in both Google and Bing webmaster tools. They were all anchor text rich, but none of them for this term or page.

-

Hi, have you seen a drop in the overall organic traffic from Google during the past year? If you are using Google Analytics for your site, you can try the following tool to check whether there has been a hit due to the Panda updates:

http://www.barracuda-digital.co.uk/panguin-tool/

Without knowing the exact domain or the URLs, it's hard to assess the exact issues. If you can share those details, we will be able to comment better.

Best regards,

Devanur Rafi

-

Hi Benita,

If you've been watching this keyword for a while and noticed the trend change, have you also noticed any of the other sites that were there with you (competitor sites within the top 10 when you were top 5) also change in rankings? How does their page copy look? Have they updated their content?

Have you looked at your copy on-page and seen that it appropriately addresses the theme of the keyword you're trying to be found for?

What is the ratio of links / images / navigation versus the text copy on the page? Does this seem natural to you when you look at the current top 5 sites / pages that rank?

Since the emergence of Panda & Penguin, the grey area previously allowed by Google to post repetitive content and questionable backlinks has significantly shrunk so if you now find you're badly off, chances are you might have moved from the grey area to the black...

my thoughts...
