URL Inspector, Rich Results Tool, GSC unable to detect Logo inside Embedded schema


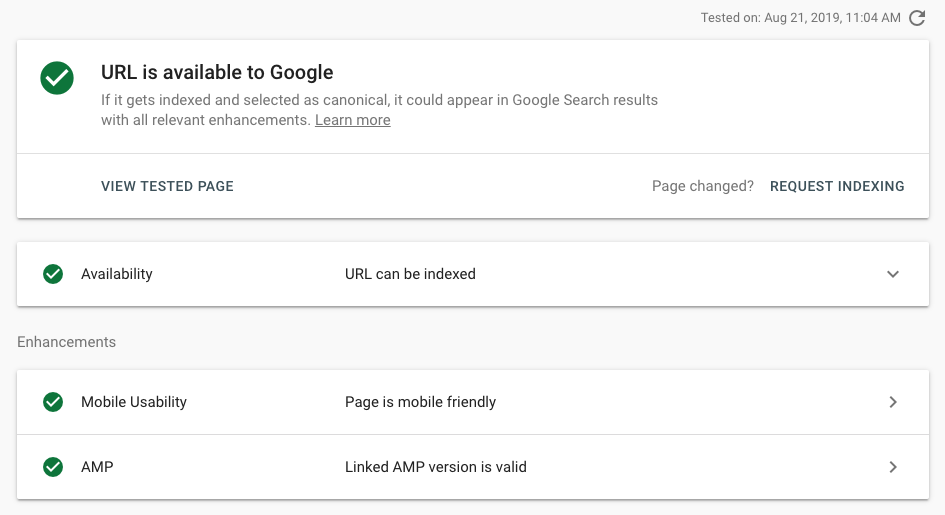

I work on a news site, and we updated our schema setup last week. Since then, valid Logo items have been dropping like flies in Search Console. Neither the URL Inspection tool nor the Rich Results Test can detect Logo on our articles. Is this a bug, or can Googlebot really not see schema nested within other schema?

Previously, we had both Organization and Article schema, separately, on all article pages (with Organization repeated inside the publisher attribute). We removed the separate Organization, and now just have Article with Organization inside the publisher attribute. The code is valid in the Structured Data Testing Tool, but URL Inspection etc. cannot detect it. Example: https://bit.ly/2TY9Bct

Here is this page in URL inspector:

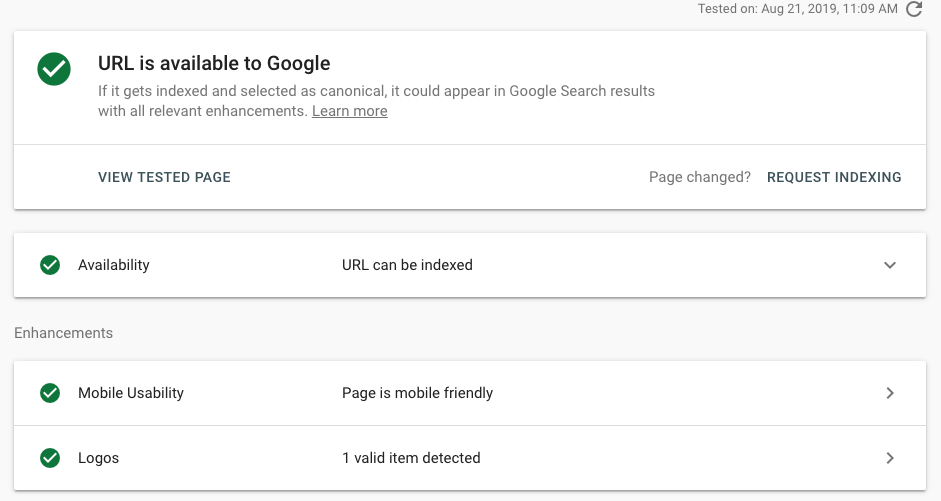

By comparison, we also have Organization schema (un-nested) on our homepage. Interestingly enough, the tools can detect that no problem. That's leading me to believe that either nested schema is unreadable by Googlebot OR that this is not an accurate representation of Googlebot and it's only unreadable by the testing tools. Here is the homepage in URL inspector:

In pseudo-code, our OLD schema looked like this:

The NEW schema setup has the same Article schema, but the separate script for Organization has been removed. We embedded our schema for a couple of reasons: first, because Google's best practices say that if multiple schemas are used, Google will choose the best one, so it's better to have just one script; second, Google's codelabs tutorial for schema uses a nested structure to indicate hierarchy of relevancy to the page.

My question is: does nesting schemas like this make it impossible for Googlebot to detect a schema type that's two or more levels deep? Or is this just a bug with the testing tools?
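For anyone trying to picture the two setups described in the question, here is a rough Python sketch. It is not the original markup: the site name, URLs, headline, and the helper names `to_script_tag` and `find_logos` are all made up for illustration. It only shows where the Organization logo sits in each structure (top level versus one level down inside `publisher`); it says nothing about how Googlebot or the testing tools actually parse a page.

```python
import json

# Hypothetical values for illustration only; not the poster's real markup.
ORGANIZATION = {
    "@type": "Organization",
    "name": "Example News",
    "url": "https://www.example.com/",
    "logo": {"@type": "ImageObject", "url": "https://www.example.com/logo.png"},
}

ARTICLE = {
    "@context": "https://schema.org",
    "@type": "Article",
    "headline": "Example headline",
    "publisher": ORGANIZATION,  # Organization nested inside the publisher attribute
}

# OLD setup: Article plus a separate top-level Organization script.
old_setup = [ARTICLE, {"@context": "https://schema.org", **ORGANIZATION}]

# NEW setup: Article only, with Organization reachable only via publisher.
new_setup = [ARTICLE]


def to_script_tag(block):
    """Render one JSON-LD block as it would appear in the page source."""
    return '<script type="application/ld+json">' + json.dumps(block) + "</script>"


def find_logos(node, depth=0):
    """Collect (owner type, logo URL, nesting depth) for every logo at any depth."""
    found = []
    if isinstance(node, dict):
        if "logo" in node:
            logo = node["logo"]
            url = logo.get("url") if isinstance(logo, dict) else logo
            found.append((node.get("@type"), url, depth))
        for value in node.values():
            found.extend(find_logos(value, depth + 1))
    elif isinstance(node, list):
        for item in node:
            found.extend(find_logos(item, depth))
    return found


if __name__ == "__main__":
    for label, setup in (("OLD", old_setup), ("NEW", new_setup)):
        print(label, "scripts:", len(setup), "logos:", find_logos(setup))
```

In this sketch the old setup exposes the logo twice (once at depth 0, once nested at depth 1), while the new setup only exposes it at depth 1. A checker that only reads the logo from a top-level Organization would report nothing for the new setup, even though the data is still present.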

Hey Hassan,

I can't see what you're seeing in GSC, but it looks like your logo is showing up on Google's actual search results. In my experience, GSC is still a little buggy, so if it's working fine in the wild, you're probably safe!

Best,

Kristina
