Blogs Not Getting Indexed Intermittently - Why?

-

Over the past 5 months, many of our clients have been having indexing issues with their blog posts.

A blog from 5 months ago could be indexed, and a blog from 1 month ago could be indexed, but blogs from 4, 3 and 2 months ago aren't indexed. It isn't consistent, and there is no commonality across all of these clients that would point to why this is happening.

We've checked sitemap, robots, canonical issues, internal linking, combed through Search Console, run Moz reports, run SEM Rush reports (sorry Moz), but can't find anything.
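For anyone wanting to repeat those basic checks in bulk, here is a minimal Python sketch of the kind of audit described above. It assumes you have already fetched the page HTML and the robots.txt text yourself; `audit_page` and `IndexSignalParser` are hypothetical names made up for illustration, not part of any SEO tool.

```python
from html.parser import HTMLParser
from urllib.robotparser import RobotFileParser


class IndexSignalParser(HTMLParser):
    """Collects the meta robots directive and canonical URL from a page."""

    def __init__(self):
        super().__init__()
        self.meta_robots = None
        self.canonical = None

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "meta" and (attrs.get("name") or "").lower() == "robots":
            self.meta_robots = (attrs.get("content") or "").lower()
        elif tag == "link" and "canonical" in (attrs.get("rel") or "").lower().split():
            self.canonical = attrs.get("href")


def audit_page(url, html, robots_txt, user_agent="Googlebot"):
    """Return basic on-page signals that can keep a URL out of the index."""
    robots = RobotFileParser()
    robots.parse(robots_txt.splitlines())  # parse text we already have
    parser = IndexSignalParser()
    parser.feed(html)
    return {
        "allowed_by_robots": robots.can_fetch(user_agent, url),
        "noindex": "noindex" in (parser.meta_robots or ""),
        # Exact string comparison; trailing-slash variants count as different.
        "canonical_points_elsewhere": parser.canonical not in (None, url),
    }
```

A clean result here only rules out the obvious blockers. It says nothing about why Google chooses not to index a page it is allowed to crawl.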

We are now manually submitting URLs to be indexed to try and ensure they get into the index.

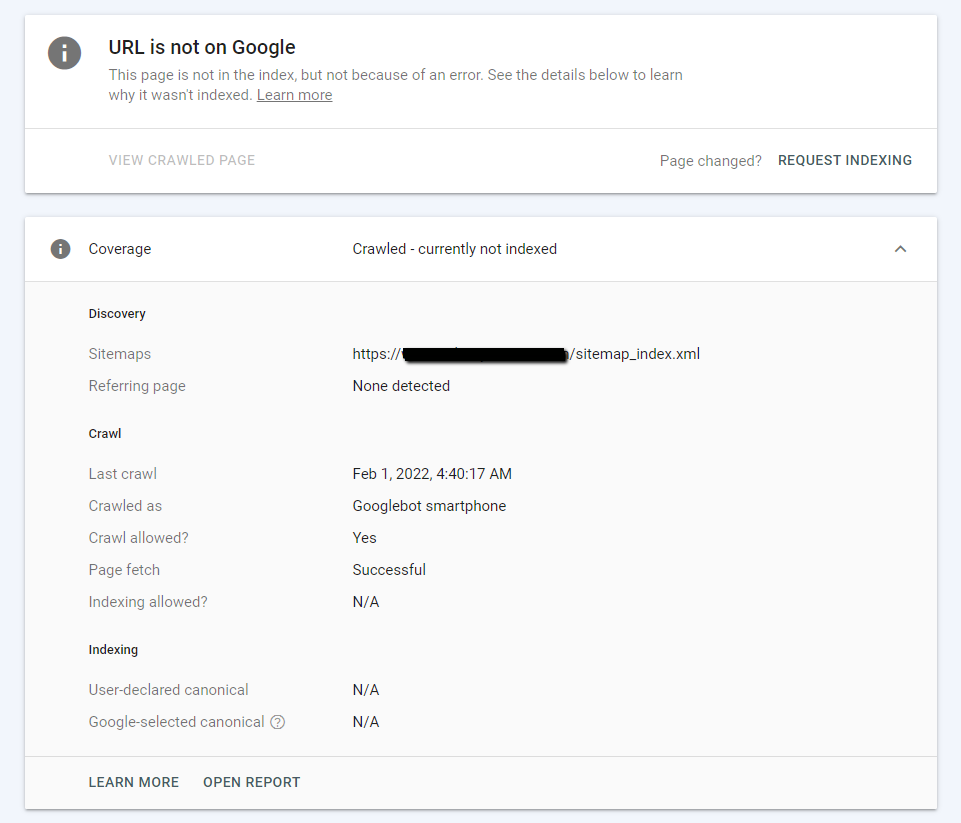

Search Console reports for many of the URLs show that the blog has been fetched and crawled, but not indexed (with no errors).

In some cases we find that the paginated blog pages (blog/page/2, blog/page/3, etc.) are getting indexed but not the blog posts themselves.
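To compare the two groups side by side, a small sketch like the one below could split a list of URLs (from a sitemap export, say) into paginated archive pages and individual posts. The `/page/<number>` pattern is an assumption based on the URLs mentioned above, and `split_paginated` is a hypothetical helper name.

```python
import re

# Assumed pattern: a path ending in /page/<number>, with optional trailing slash.
PAGINATION_RE = re.compile(r"/page/\d+/?$")


def split_paginated(urls):
    """Separate paginated archive URLs from everything else."""
    posts, paginated = [], []
    for url in urls:
        path = url.split("?", 1)[0]  # ignore any query string
        if PAGINATION_RE.search(path):
            paginated.append(url)
        else:
            posts.append(url)
    return posts, paginated
```

Each group can then be checked separately in Search Console to see whether the pattern holds across clients.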

There aren't any nofollow tags on the links going to the blogs either.
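A check like this can be scripted rather than eyeballed. The following is a rough sketch, assuming the listing page's HTML is already in hand; `find_nofollow_links` is a hypothetical name, and it only looks at `rel` attributes on `<a>` tags, not at a page-level robots meta tag or an `X-Robots-Tag` header.

```python
from html.parser import HTMLParser


class NofollowLinkFinder(HTMLParser):
    """Records the href of every <a> whose rel attribute contains nofollow."""

    def __init__(self):
        super().__init__()
        self.nofollow_links = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        attrs = dict(attrs)
        rel_tokens = (attrs.get("rel") or "").lower().split()
        if "nofollow" in rel_tokens and attrs.get("href"):
            self.nofollow_links.append(attrs["href"])


def find_nofollow_links(html):
    """Return hrefs of links marked rel="nofollow" in the given HTML."""
    finder = NofollowLinkFinder()
    finder.feed(html)
    return finder.nofollow_links
```

An empty list for the blog index and paginated pages would confirm what is described above.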

Any ideas?

*I've added a screenshot of one of the URL inspection reports from Search Console.

-

Very interesting. I never thought of deleting a URL and creating a new (better) one to get it indexed successfully. I'll have to keep that in mind if I need an important URL indexed.

-

@johnbracamontes Hello John, I would recommend checking whether the content of these articles is similar to other posts on your blog. I would also recommend downloading the featured image and adding a description related to the title of your article. In the same way, verify that you have only one h1, at the beginning of the article, and slightly modify the h2 titles you have.
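The heading check suggested here is easy to automate. This is a minimal sketch, assuming the post's HTML has already been fetched; `count_headings` is a hypothetical helper name, and it counts tags only, without judging whether the headings are well written.

```python
from collections import Counter
from html.parser import HTMLParser


class HeadingCounter(HTMLParser):
    """Counts h1 and h2 opening tags in a document."""

    def __init__(self):
        super().__init__()
        self.counts = Counter()

    def handle_starttag(self, tag, attrs):
        if tag in ("h1", "h2"):
            self.counts[tag] += 1


def count_headings(html):
    """Return a Counter with the number of h1 and h2 tags found."""
    counter = HeadingCounter()
    counter.feed(html)
    return counter.counts
```

A post where `count_headings(html)["h1"]` is anything other than 1 would be worth a closer look.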

-

Google has been much pickier lately about which pages it indexes, and it has also suffered some indexing bugs. So yeah, indexing can be a real pain.

According to Google, when they crawl but do not index a blog post, it is probably due to content quality issues, either from that post or the website overall.

Based on what's worked for us, I'd suggest substantially modifying the content of those posts (adding content, images, etc.) and then manually resubmitting them. If that doesn't get them indexed, delete the post, publish the content at a new post URL, and then submit it.

Hope that helps.

-

I kept running into the same problem. I changed the URL and resubmitted it, and it worked. I then changed the URL back to the previous one and resubmitted it. It is now indexed on Google.

-

Nothing?

Would love to hear any thoughts.

