After more than 13 years, and tens of thousands of questions, Moz Q&A closed on 12th December 2024. Whilst we’re not completely removing the content - many posts will still be possible to view - we have locked both new posts and new replies. More details here.

Google keeps marking different pages as duplicates

-

My website has many pages like this:

mywebsite/company1/valuation

mywebsite/company2/valuation

mywebsite/company3/valuation

mywebsite/company4/valuation

...

These pages describe the valuation of each company.

These pages were never identical, but initially I included a few generic paragraphs (what valuation is, what a valuation model is, etc.) on every page, so parts of their content were identical.

Google marked many of these pages as duplicates (in Google Search Console), so I modified them: I removed the generic paragraphs and added information that is unique to each company. As a result, the pages are now very different from each other and have little in common.

Although it has been more than a month since I made the change, Google still marks the majority of these pages as duplicates, even though it has already crawled the new versions. Is there anything else I can do in this situation?

Thanks

-

Google may mark different pages as duplicates if they contain very similar or identical content. This can happen because of duplicate metadata (titles and descriptions), URL parameters, or syndicated content. To address this, ensure each page has unique and valuable content, use canonical tags when appropriate, and consolidate URL parameter variations with canonical tags or redirects (the URL Parameters tool in Google Search Console was retired in 2022).
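To get a rough idea of how much text two of your pages still share, you could compare them yourself. The sketch below measures the overlap of word sequences ("shingles") between two page texts with a Jaccard score. It is only an illustration: the function names and the shingle size are my own choices, and this is not how Google decides what counts as a duplicate.

```python
import re


def shingles(text, size=5):
    """Return the set of overlapping word n-grams ("shingles") in text."""
    words = re.findall(r"\w+", text.lower())
    if len(words) < size:
        # Text shorter than one shingle: treat the whole text as one unit.
        return {tuple(words)} if words else set()
    return {tuple(words[i:i + size]) for i in range(len(words) - size + 1)}


def jaccard_similarity(text_a, text_b, size=5):
    """Share of shingles the two texts have in common (0.0 to 1.0)."""
    set_a, set_b = shingles(text_a, size), shingles(text_b, size)
    if not set_a and not set_b:
        return 1.0  # two empty texts are trivially identical
    return len(set_a & set_b) / len(set_a | set_b)
```

Run it on the visible text of two valuation pages (with navigation, header, and footer text included, since those are shared too). A score close to 1.0 means the pages are still mostly the same text; a score close to 0.0 means they share very little.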

-

Yes, there are a few other things you can do if Google is still marking your pages as duplicates after you have modified them to be unique:

-

Check your canonical tags. Canonical tags tell Google which version of a page you prefer to have indexed. Each valuation page should carry a canonical tag that points to its own URL; a tag that points to another company's page or to a shared template URL tells Google to treat the page as a duplicate. If the tags are correct, Google should eventually recognize that the pages are not duplicates.

-

Check how URL parameters are handled. The URL Parameters tool in Google Search Console was retired in 2022, so parameter variations (for example, tracking parameters or different sorting options) now have to be consolidated with canonical tags, redirects, or consistent internal links. This only matters if the same page is reachable under several parameterized URLs; the URLs you listed do not use parameters.

-

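Request a recrawl. In Google Search Console, open the URL Inspection tool and use Request Indexing for a few of the affected pages, and resubmit your sitemap. Since Google has already crawled the new versions, this mainly prompts it to re-evaluate them; the status shown in the report may lag behind the crawl.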

If you have done all of the above and Google is still marking your pages as duplicates, you may need to ask for help in the Google Search Central Help Community, since Google does not offer direct support for organic search issues.
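The canonical check above can be automated. Here is a minimal sketch, assuming you already have the HTML of each page as a string (fetching is left out). The class and function names are made up for illustration, and the URL comparison only ignores a trailing slash.

```python
from html.parser import HTMLParser
from typing import Optional


class CanonicalFinder(HTMLParser):
    """Remembers the href of the first <link rel="canonical"> tag it sees."""

    def __init__(self) -> None:
        super().__init__()
        self.canonical: Optional[str] = None

    def handle_starttag(self, tag, attrs):
        if tag != "link" or self.canonical is not None:
            return
        attr_map = dict(attrs)
        # rel may hold several space-separated tokens; values may be None.
        rel_tokens = (attr_map.get("rel") or "").lower().split()
        if "canonical" in rel_tokens:
            self.canonical = attr_map.get("href")


def find_canonical(html: str) -> Optional[str]:
    """Return the canonical URL declared in the HTML, or None if absent."""
    parser = CanonicalFinder()
    parser.feed(html)
    return parser.canonical


def is_self_canonical(page_url: str, html: str) -> bool:
    """True if the page declares itself (ignoring a trailing slash) as canonical."""
    canonical = find_canonical(html)
    return canonical is not None and canonical.rstrip("/") == page_url.rstrip("/")
```

If `is_self_canonical` returns False for a valuation page, look at what `find_canonical` returns: a missing tag or a tag pointing at a different company's URL would both be worth fixing.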

-

-

If Google is marking different pages on your website as duplicates, it can negatively impact your website's search engine rankings. Here are some common reasons why Google may be doing this and steps you can take to address the issue:

Duplicate Content: Google's algorithms are designed to filter out duplicate content from search results. Ensure that your website does not have identical or near-identical content on multiple pages. Each page should offer unique and valuable content to users.

URL Parameters: If your website uses URL parameters for sorting, filtering, or tracking purposes, Google may interpret these variations as duplicate content. Use canonical tags to specify which version of the URL you want to be indexed. The URL parameter tool in Google Search Console is no longer available; Google retired it in 2022.

Pagination: For websites with paginated content (e.g., product listings, blog archives), give each page in the series its own self-referencing canonical tag and link the pages to each other with normal links. Google announced in 2019 that it no longer uses rel="next" and rel="prev" as an indexing signal, although the tags are harmless and other search engines may still read them.

www vs. non-www: Make sure you have a preferred domain (e.g., www.example.com or example.com) and set up 301 redirects to the preferred version. Google may treat www and non-www versions as separate pages with duplicate content.

HTTP vs. HTTPS: Ensure that your website uses secure HTTPS. Google may view HTTP and HTTPS versions of the same page as duplicates. Implement 301 redirects from HTTP to HTTPS to resolve this.

Mobile and Desktop Versions: If you have a separate mobile version of your site (e.g., m.example.com), use rel="alternate" on the desktop page and rel="canonical" on the mobile page to specify the relationship between the two versions. A responsive design serves the same URL to all devices and needs no such tags.

Thin or Low-Quality Content: Pages with little or low-quality content may be flagged as duplicates. Improve the content on such pages to provide unique value to users.

Canonical Tags: Implement canonical tags correctly to indicate the preferred version of a page when there are multiple versions with similar content.

XML Sitemap: Ensure that your XML sitemap is up-to-date and accurately reflects your website's structure. Submit it to Google Search Console.

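Avoid Scraped Content: Ensure that your content is original and not scraped or copied from other websites. Google generally filters duplicate content out of its results rather than penalizing it, but deliberately scraped or plagiarized content can lead to a manual action.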

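Check for Technical Errors: Use the Page indexing report and the URL Inspection tool in Google Search Console to see which URL Google selected as canonical for each flagged page, and to check for crawl errors or other technical issues that might be causing duplicate content problems.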

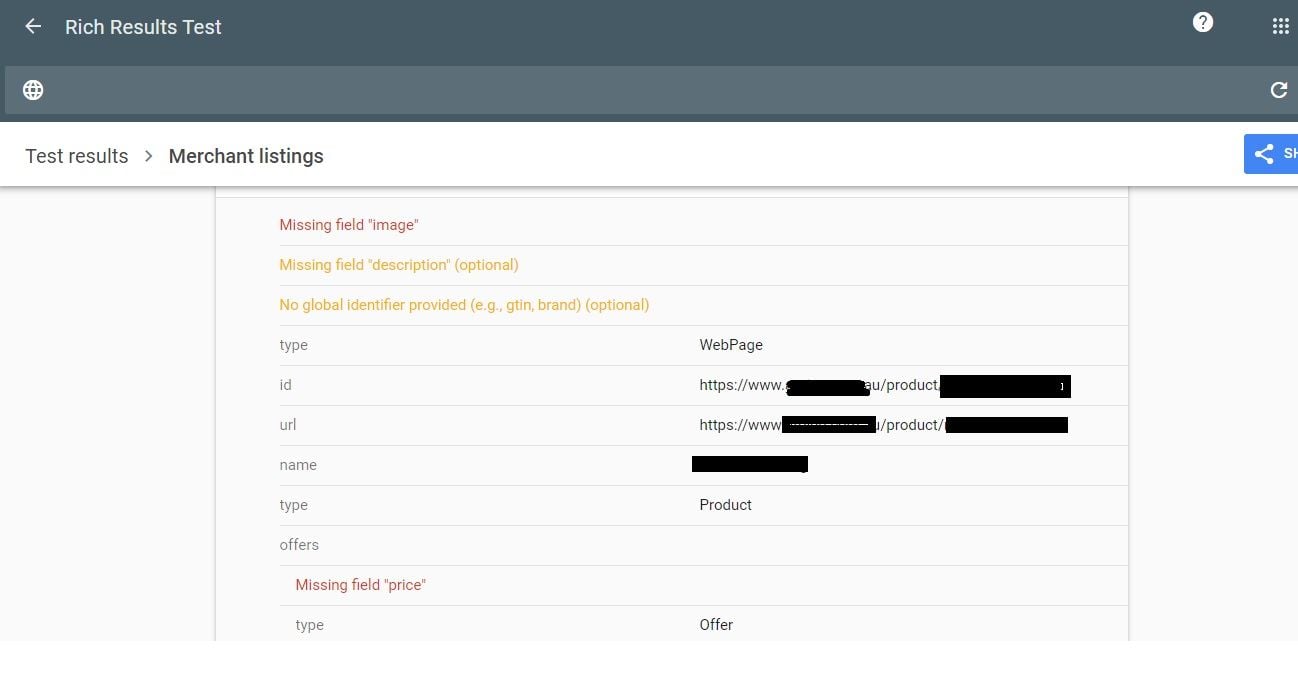

Structured Data: Ensure that your structured data (schema markup) is correctly implemented on your pages. Incorrectly structured data can confuse search engines.
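For the www vs. non-www and HTTP vs. HTTPS points above, the rule behind the 301 redirects can be sketched like this. The preferred host `www.example.com` is an assumption (use your own), the function names are made up, and ports are ignored for simplicity. In practice the redirect itself is usually configured in the web server or CDN; this only shows the mapping.

```python
from urllib.parse import urlsplit, urlunsplit

PREFERRED_HOST = "www.example.com"  # assumption: replace with your preferred domain


def strip_www(host):
    """Drop a leading "www." so www and non-www hosts compare equal."""
    return host[4:] if host.startswith("www.") else host


def preferred_url(url):
    """Map any http/https, www/non-www variant to the single preferred URL."""
    parts = urlsplit(url)
    host = (parts.hostname or "").lower()
    if strip_www(host) != strip_www(PREFERRED_HOST):
        return url  # a different site: leave it untouched
    return urlunsplit(
        ("https", PREFERRED_HOST, parts.path or "/", parts.query, parts.fragment)
    )


def redirect_target(url):
    """Return the URL to 301-redirect to, or None if url is already preferred."""
    target = preferred_url(url)
    return target if target != url else None
```

Every variant of a page should end up at one address, so `http://example.com/company1/valuation` and `https://www.example.com/company1/valuation` are treated as the same page.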

Regularly monitor Google Search Console for any duplicate content issues and take prompt action to address them. It's essential to provide unique and valuable content to your website visitors while ensuring that search engines can correctly index and rank your pages.
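On the XML sitemap point, a small sketch of generating one from a list of URLs, using only the Python standard library. The function name and the example.com URLs are placeholders; the idea is to list only the one URL per page that you want indexed, which as far as I know Google treats as a hint rather than a directive.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(urls):
    """Build a minimal sitemap document containing one <url><loc> per URL."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url in urls:
        entry = ET.SubElement(urlset, "url")
        ET.SubElement(entry, "loc").text = url  # special characters are escaped
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body
```

For example, `build_sitemap(["https://example.com/company1/valuation", "https://example.com/company2/valuation"])` returns a string you can save as sitemap.xml and submit in Google Search Console.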
