After more than 13 years, and tens of thousands of questions, Moz Q&A closed on 12th December 2024. Whilst we’re not completely removing the content - many posts will still be possible to view - we have locked both new posts and new replies. More details here.

What Tools Should I Use to Investigate Damage to My Website?

-

I would like to know what tools I should use, and how to investigate damage to my website, in2town.co.uk. I hired someone to do some work on my website, but they damaged it. That person was on a freelance platform and has since been removed because of all the complaints made about them. They also put backdoors on websites, including mine, and added content.

I also had a second problem where my content was being stolen.

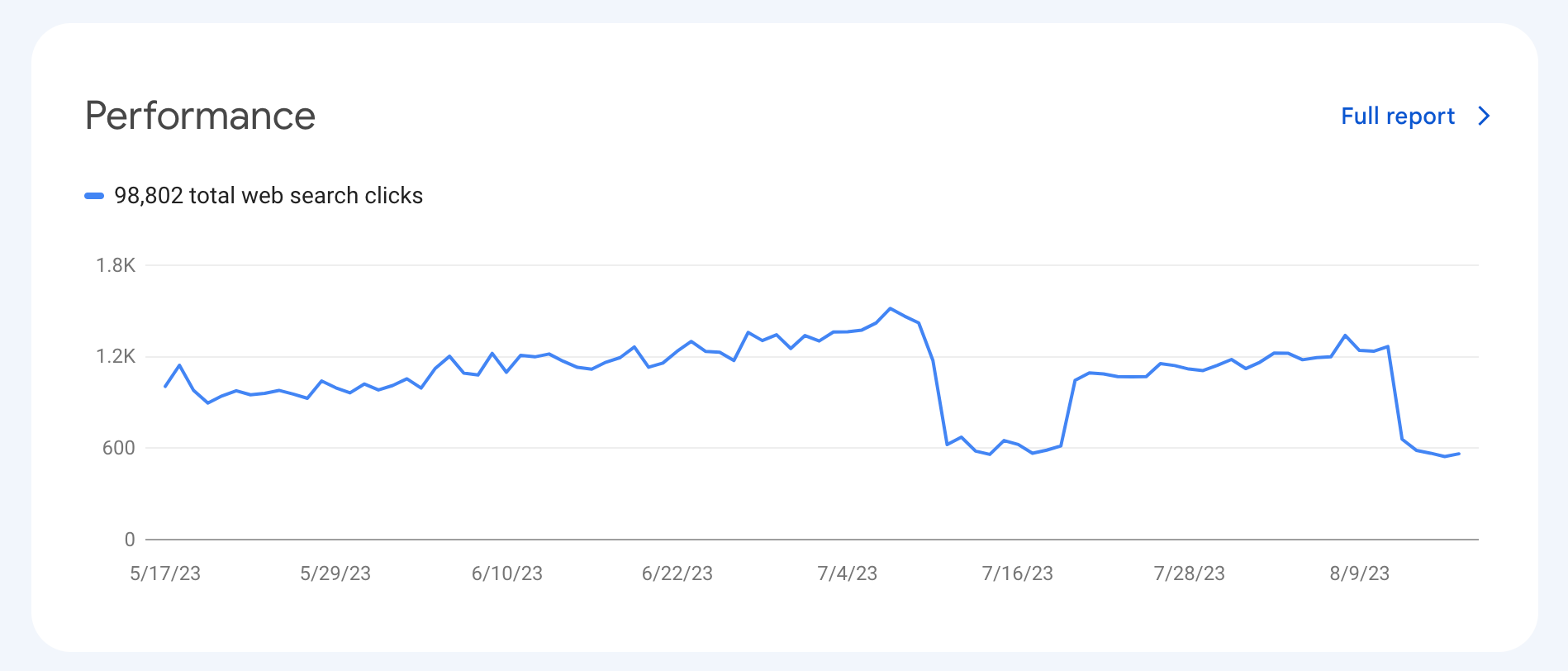

My site always did well and had lots of keywords in the top five and top ten, but now they are not even in the top 200. This happened in January and February.

When I write unique articles, they do not show up in Google. I need to find out what the problem is and how to fix it. Can anyone please help?

-

Repairing website damage requires a structured approach. Start by assessing any issues caused by the freelancer, using a tool like Wordfence for WordPress to detect backdoors or malicious changes. It’s possible Google penalized you for whatever work the freelancer did. You might need to disavow toxic links they built, for example, or address other issues. For the stolen content, a tool such as Google Alerts can help you spot copies of your articles so you can act on them.
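To give a rough idea of what a backdoor check looks for, here is a minimal Python sketch that flags PHP files containing a few code shapes often seen in injected backdoors. The pattern list and the names `SUSPICIOUS_PATTERNS`, `scan_text`, and `scan_tree` are my own illustrative assumptions. This is not how Wordfence works internally, and a clean result does not prove a site is safe.

```python
import re
from pathlib import Path

# Illustrative patterns only (an assumption, not any scanner's real rule set).
SUSPICIOUS_PATTERNS = {
    "eval of decoded payload": re.compile(
        r"eval\s*\(\s*(base64_decode|gzinflate|str_rot13)\s*\(", re.I
    ),
    "assert on request data": re.compile(
        r"assert\s*\(\s*\$_(GET|POST|REQUEST|COOKIE)", re.I
    ),
    "shell call on request data": re.compile(
        r"(system|exec|passthru|shell_exec)\s*\(\s*\$_(GET|POST|REQUEST|COOKIE)", re.I
    ),
}


def scan_text(source: str) -> list[str]:
    """Return the names of the suspicious patterns found in one file's source."""
    return [
        name
        for name, pattern in SUSPICIOUS_PATTERNS.items()
        if pattern.search(source)
    ]


def scan_tree(root: str) -> dict[str, list[str]]:
    """Scan every .php file under root; map file path -> matched pattern names."""
    findings = {}
    for path in Path(root).rglob("*.php"):
        hits = scan_text(path.read_text(errors="ignore"))
        if hits:
            findings[str(path)] = hits
    return findings
```

You would run `scan_tree` against a downloaded copy of the site files and review each flagged file by hand, since legitimate plugins sometimes use these functions too.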

For future reference, regular monitoring helps you catch unauthorized changes early and stay informed about industry trends. If you monitor a web page for changes, you can spot unauthorized adjustments to your site soon after they happen. There are several ways to approach this and several tools you can use; some will notify you when your webpage changes. Monitoring competitors for content trends can also guide your strategy and reveal potential areas for improvement.
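If you want to see the basic idea behind page change monitoring, it can be sketched in a few lines of Python: store a fingerprint of the page, fetch it again later, and compare. The function names `fetch`, `fingerprint`, and `has_changed` are hypothetical, and this is only a sketch of the approach, not how any particular monitoring tool does it.

```python
import hashlib
import urllib.request


def fetch(url: str) -> bytes:
    """Download the raw bytes of a page."""
    with urllib.request.urlopen(url, timeout=30) as response:
        return response.read()


def fingerprint(content: bytes) -> str:
    """Return a SHA-256 hex digest that identifies this exact content."""
    return hashlib.sha256(content).hexdigest()


def has_changed(previous_fingerprint: str, content: bytes) -> bool:
    """True if the content no longer matches the stored fingerprint."""
    return fingerprint(content) != previous_fingerprint
```

In practice you would save `fingerprint(fetch(url))` once, then run `has_changed` on a schedule. Pages that embed timestamps or per-visit tokens will differ on every fetch, so a real monitor usually compares only the main content area.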

-

Use Google Search Console and Screaming Frog.

-

The best tool I have used to diagnose problems on my service-based website (office interior design) is Google Search Console (GSC). You can also use Screaming Frog or Moz to analyze and solve issues.

-

PageFreezer: instantly preserve web pages and social media profiles to capture evidence of website damage.

-

To investigate damage to your website, consider three kinds of tools: Google Search Console for monitoring search performance and detecting issues, a website security scanner such as Wordfence to check for malware or vulnerabilities, and a website auditing tool such as SEMrush or Screaming Frog for a comprehensive analysis of technical SEO issues and website health.
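One technical issue an audit can surface, and one worth ruling out when new articles never appear in Google, is a stray `noindex` directive in the page's robots meta tag. Below is a minimal Python sketch of that single check; the names `RobotsMetaParser` and `is_noindexed` are hypothetical. It only reads the HTML, so it will not see a `noindex` sent through the `X-Robots-Tag` HTTP header, and it is not a substitute for a full crawl.

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collect directives from <meta name="robots"> and <meta name="googlebot">."""

    def __init__(self):
        super().__init__()
        self.directives = []

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr = {key: (value or "") for key, value in attrs}
        if attr.get("name", "").lower() in ("robots", "googlebot"):
            content = attr.get("content", "")
            self.directives += [d.strip().lower() for d in content.split(",")]


def is_noindexed(html: str) -> bool:
    """True if the page's meta tags ask search engines not to index it."""
    parser = RobotsMetaParser()
    parser.feed(html)
    # "none" is shorthand for "noindex, nofollow".
    return "noindex" in parser.directives or "none" in parser.directives
```

If `is_noindexed` returns `True` for the saved HTML of an article that should rank, check the theme, SEO plugin settings, and any code the freelancer touched.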
